OpenAI Accused of Training GPT-4o on Paywalled Content

OpenAI is accused of training GPT-4o on paywalled O'Reilly books without consent. Researchers claim the model shows strong recognition of restricted content.

Apr 03, 2025


A new study alleges that OpenAI most likely trained its GPT-4o model on paywalled content without the publisher's consent.

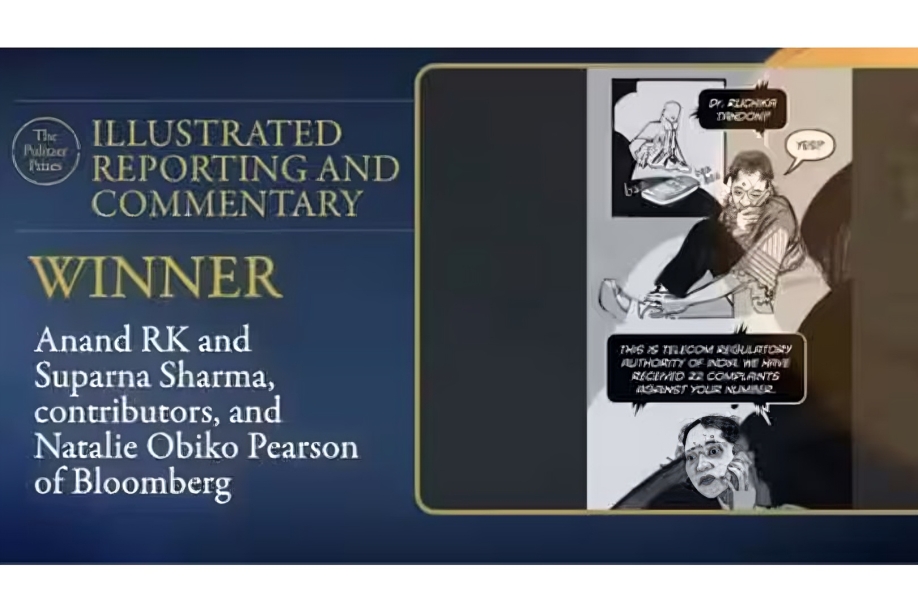

Researchers from the AI Disclosures Project, a nonprofit AI watchdog group founded in 2024, have released a paper concluding that OpenAI increasingly relied on paywalled books published by O'Reilly Media to train its GPT-4o model.

“GPT-4o, OpenAI’s more recent and capable model, demonstrates strong recognition of paywalled O’Reilly book content … compared to OpenAI’s earlier model GPT-3.5 Turbo. In contrast, GPT-3.5 Turbo shows greater relative recognition of publicly accessible O’Reilly book samples,” the research paper reads.

"GPT-4o [most likely] knows, and therefore has had prior exposure to, many non-public O'Reilly books released before its training cut-off date," the authors wrote. According to the paper, there is no content licensing agreement between OpenAI and O'Reilly Media.

The claims come as the Microsoft-backed company fights several lawsuits from various parties alleging that its training-data practices amount to copyright infringement.

To determine whether copyrighted material had been included in the data used to train GPT-4o, the researchers used DE-COP, a technique known as a "membership inference attack."

According to a report by TechCrunch, the method tests whether a large language model (LLM) can reliably distinguish a human-written passage from AI-generated paraphrases of the same passage. If the model can consistently pick out the original, that suggests it may have encountered the text in its training data.
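To make the idea concrete, here is a minimal Python sketch of a DE-COP-style multiple-choice test. It assumes the model is shown the original passage alongside three paraphrases in every possible order and asked which one is verbatim. The `Chooser` interface, the toy "models", and the hand-written paraphrases are hypothetical stand-ins for a real LLM and for the AI-generated paraphrases the method relies on; this is an illustration of the principle, not the researchers' implementation.

```python
import itertools
from typing import Callable, Sequence

# Hypothetical interface: a "chooser" stands in for the LLM under test.
# Given candidate passages, it returns the index of the one it claims is
# the verbatim original. A real audit would wrap a model API call here.
Chooser = Callable[[Sequence[str]], int]


def decop_score(original: str, paraphrases: Sequence[str], choose: Chooser) -> float:
    """Return the fraction of option orderings in which `choose` picks `original`.

    Every permutation of the options is shown once, so a model that simply
    favours one position scores exactly chance (1 / number of options).
    """
    options = [original, *paraphrases]
    correct = 0
    trials = 0
    for order in itertools.permutations(options):
        picked = order[choose(order)]
        correct += picked == original
        trials += 1
    return correct / trials


def chance_level(num_paraphrases: int) -> float:
    """Expected score of a model that has never seen the passage."""
    return 1 / (num_paraphrases + 1)


def memorising_chooser(seen: set[str]) -> Chooser:
    """Toy model (hypothetical): picks a passage it saw in 'training', else option 0."""
    def choose(options: Sequence[str]) -> int:
        for i, text in enumerate(options):
            if text in seen:
                return i
        return 0
    return choose


def first_option_chooser(options: Sequence[str]) -> int:
    """Toy model (hypothetical) with no knowledge of the text: always answers option 0."""
    return 0


if __name__ == "__main__":
    original = "The quick brown fox jumps over the lazy dog."
    paraphrases = [
        "A fast brown fox leaps over a lazy dog.",
        "The speedy fox, brown in colour, jumps over the idle dog.",
        "Over the lazy dog jumps the quick brown fox.",
    ]
    print(decop_score(original, paraphrases, memorising_chooser({original})))  # 1.0
    print(decop_score(original, paraphrases, first_option_chooser))  # 0.25
    print(chance_level(len(paraphrases)))  # 0.25
```

Showing every ordering means a model that always favours one slot lands exactly at chance, so only a score well above that level hints at prior exposure. A real audit would also need many excerpts and a comparison baseline, which this sketch leaves out.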

The researchers tested GPT-4o, GPT-3.5 Turbo, and other OpenAI models. Using 13,962 paragraph excerpts from 34 books published by O'Reilly Media, they estimated the likelihood that a given excerpt had been part of a model's training dataset.

According to the findings, GPT-4o “recognised” more paywalled book content than GPT-3.5 Turbo and older OpenAI models, even after accounting for the improved capabilities of OpenAI’s newer models.

However, the paper acknowledges limitations in its methodology. For example, GPT-4o may have been exposed to paywalled excerpts that users pasted into ChatGPT as part of their prompts, rather than through its training data.

OpenAI and Google have urged the Trump administration to codify the use of copyrighted material for AI training under the fair use exception. OpenAI has also signed licensing agreements with news publishers, social networks, stock media libraries, and others to acquire data for AI training, and it has reportedly hired journalists to help fine-tune its models' outputs.

By: Tech Desk


For the Latest News, Visit: Frontlist
